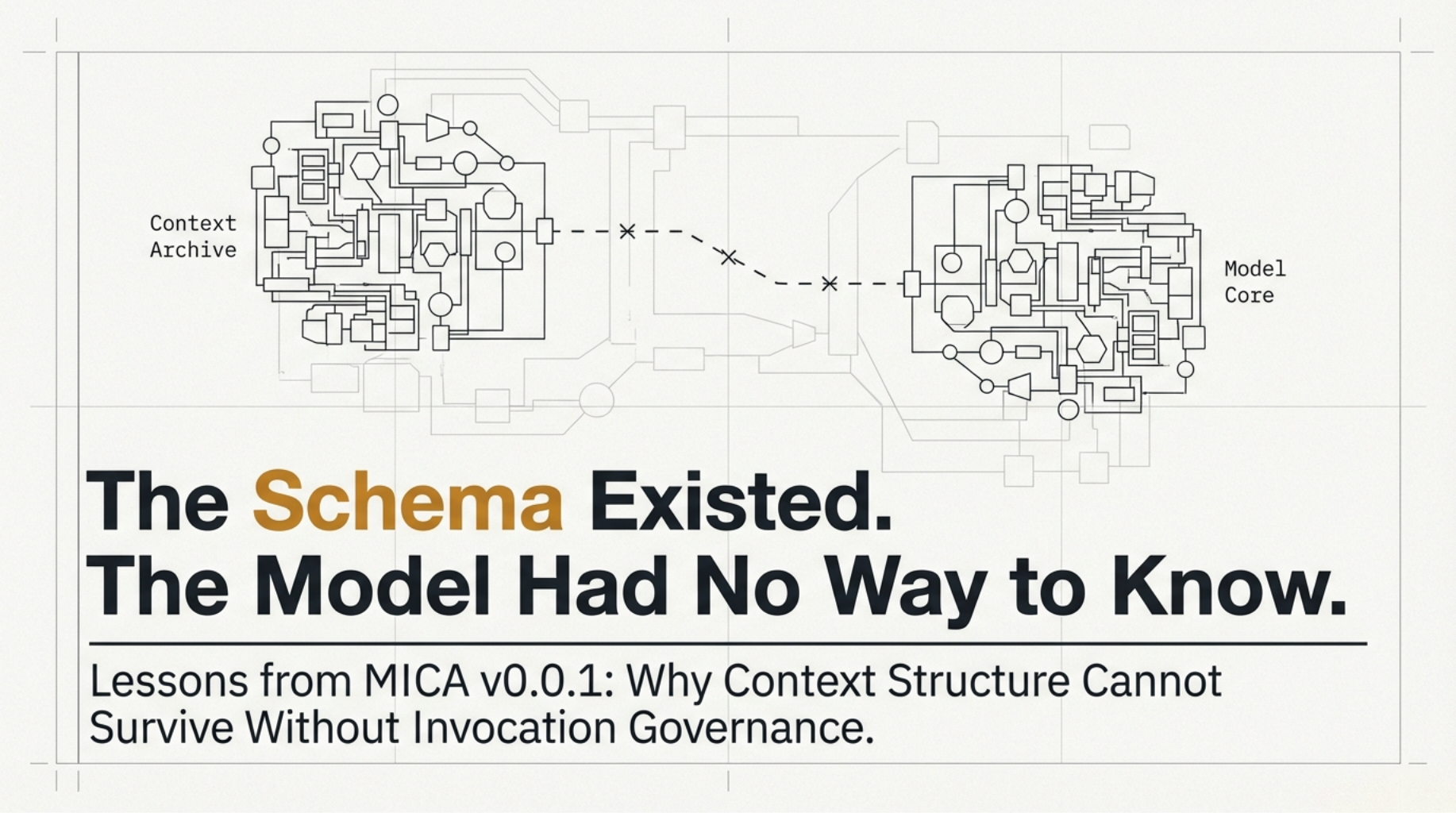

The Schema Existed. The Model Had No Way to Know.

v0.0.1 proved that context could be structured. It did not prove that the structure could govern what shaped the session. Three failures — and why only one made the others meaningless.

MICA Series, Part 2 of 2

Glossary: terms used in this article

🔸 MICA (Memory Invocation & Context Archive): A governance schema for AI context management. Defines how context should be structured, trusted, scored, and handed off across sessions.

🔸 Session Loss: The architectural characteristic of LLMs where no information persists between independent conversations. Not a bug. A design property with real engineering consequences for long-running projects.

🔸 Trust Class: The reliability classification of a context item's source. In this series: canonical (repo truth), distilled (summarized from sessions), raw (unprocessed session output), symbolic (reference only).

🔸 Invoke Role: A governance label that defines a context item's eviction behavior. In this series: anchor (never evict), bridge (preserve across phases), hint (drop first under pressure), none (drop immediately).

🔸 Invocation: The mechanism by which a MICA archive reaches an AI session. Without explicit invocation, the archive exists but has no effect on the session. Formalized as a required field in v0.1.8.

🔸 Admission Gate: The point at which a context item is evaluated for inclusion. Decides what goes in — before output validation begins.
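The Invoke Role definitions describe eviction behavior, which is easier to see as code. The sketch below is a minimal illustration, not MICA's eviction strategy. The ContextItem type, the token counts, and the choice to drop hint items largest-first are all assumptions. Bridge items are simply kept, because this single trimming pass has no notion of phases.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ContextItem:
    name: str
    invoke_role: str  # one of "anchor", "bridge", "hint", "none"
    tokens: int       # hypothetical size measure


def trim_to_budget(items: List[ContextItem], budget: int) -> List[ContextItem]:
    """Apply the glossary's invoke-role rules to fit a token budget (sketch)."""
    # "none": drop immediately, whatever the budget.
    kept = [item for item in items if item.invoke_role != "none"]
    total = sum(item.tokens for item in kept)

    # "hint": drop first under pressure. Largest-first is an assumption.
    hints = sorted(
        (item for item in kept if item.invoke_role == "hint"),
        key=lambda item: item.tokens,
        reverse=True,
    )
    for hint in hints:
        if total <= budget:
            break
        kept.remove(hint)
        total -= hint.tokens

    # "anchor" is never evicted; "bridge" is kept by this single-pass sketch.
    # The result can still exceed the budget if anchors and bridges alone do.
    return kept
```

With an 800-token budget, an anchor (400), a bridge (300), two hints (200 and 100), and a none item (500), the none item goes first, then the 200-token hint, and the remaining three items fit exactly.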

1. What v0.0.1 Was

Part 1 established the problem: session loss is not an inconvenience. For long-running projects, it is a structural failure. The standard responses — longer prompts, RAG, session summaries, flat memory exports — all treat context as a document to be read, not a governed structure with authority levels, eviction rules, and provenance.

v0.0.1 was the first attempt at a specification-level answer.

It proved that context could be structured. It did not prove that the structure could govern what context is allowed to shape the session. Those are different claims. v0.0.1 satisfied the first and failed the second — in three distinct ways.

Only one of those failures made the other two meaningless.

2. A Different Layer

While working through the problem, I was reading broadly. arXiv papers on memory management. Dev.to articles on LLM reliability. Community discussions about what people had tried and where it broke.

One article in particular was useful: "Why Asking an LLM for JSON Isn't Enough." In that article and the discussion it generated, a clear framing emerged:

Treat the LLM as an unreliable upstream service. Add schema, validation, retry, fallback.

The operative mental model was this:

This framing is correct, and useful, for what it solves: defensive parsing and output reliability. None of it addressed admission control — denying a context item before it reached the model at all.
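For contrast, here is roughly what the output-side framing looks like in practice. This is a hedged sketch, not code from that article: call_model stands in for any function that returns raw model text, and the required keys and fallback value are hypothetical. Every line deals with what comes out; nothing here decides what went in.

```python
import json
from typing import Callable, Dict, Optional

REQUIRED_KEYS = {"title", "summary"}  # hypothetical output schema


def parse_with_retry(
    call_model: Callable[[], str],
    retries: int = 2,
    fallback: Optional[Dict] = None,
) -> Dict:
    """Treat the model as an unreliable upstream: parse, validate, retry, fall back."""
    for _ in range(retries + 1):
        raw = call_model()
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON: ask again
        if isinstance(data, dict) and REQUIRED_KEYS.issubset(data):
            return data  # schema check passed
    # Every attempt failed validation: return a safe default instead of raising.
    return fallback if fallback is not None else {"title": "", "summary": ""}
```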

The question I needed to answer was structurally different:

The first asks "how do I handle what comes out?" The second asks "what goes in, and under what rules?"

MICA is not a replacement for output validation. It is the upstream governance layer that decides what is allowed to shape the session before output validation even begins.
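An admission gate sits earlier in the pipeline. The sketch below filters candidate context items by trust class before any prompt is assembled, and records why each denied item was denied so the decision is auditable. The admission table is an illustrative assumption, not MICA's rule set: it admits canonical and distilled items, denies raw and symbolic ones, and denies any trust class it does not recognize.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical policy keyed by the glossary's trust classes.
ADMIT_BY_TRUST = {"canonical": True, "distilled": True, "raw": False, "symbolic": False}


@dataclass(frozen=True)
class Candidate:
    name: str
    trust_class: str


def admission_gate(
    candidates: List[Candidate],
) -> Tuple[List[Candidate], List[Tuple[Candidate, str]]]:
    """Decide what may shape the session before the model sees anything."""
    admitted: List[Candidate] = []
    denied: List[Tuple[Candidate, str]] = []
    for candidate in candidates:
        # Unknown trust classes default to denial rather than admission.
        if ADMIT_BY_TRUST.get(candidate.trust_class, False):
            admitted.append(candidate)
        else:
            denied.append((candidate, f"trust_class {candidate.trust_class!r} not admitted"))
    return admitted, denied
```

Only the admitted list would be passed on to prompt assembly. Output validation then runs on whatever the model produces from it.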

3. Three Failures in v0.0.1

Failure 1: No defined semantics for scoring.

These were hardcoded test values. They existed to confirm that the pipeline could apply differential weights at all — not to define what those weights should be.

The problem was not that the numbers were heuristic. The problem was that the heuristics had no defined semantics: no rule explained how they produced a score.

There was no combination rule.

No output range.

No normalization.

What canonical_memory_bonus: 0.15 meant relative to raw_logs_penalty: 0.20, how those four numbers combined into a final score, and what the result represented: none of that was specified.

A conforming implementation was not possible. That means two tools claiming to implement v0.0.1 could rank the same archive differently and both still claim compliance.
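To see why the missing combination rule matters, take the two weights named above and two equally plausible ways to combine them. Both rules below are hypothetical, as are the item names and base relevance scores; v0.0.1 specified neither rule, which is the point. Applied to the same two items, they produce opposite rankings.

```python
from typing import Callable, Dict, List, Tuple

# The two v0.0.1 test values named in the text. The combination rules are hypothetical.
CANONICAL_MEMORY_BONUS = 0.15
RAW_LOGS_PENALTY = 0.20

# item name -> (trust class, hypothetical base relevance)
ARCHIVE: Dict[str, Tuple[str, float]] = {
    "design-doc": ("canonical", 0.2),
    "session-log": ("raw", 0.5),
}


def additive(trust: str, relevance: float) -> float:
    """Tool A: treat the weights as absolute offsets."""
    if trust == "canonical":
        return relevance + CANONICAL_MEMORY_BONUS
    if trust == "raw":
        return relevance - RAW_LOGS_PENALTY
    return relevance


def multiplicative(trust: str, relevance: float) -> float:
    """Tool B: treat the same weights as relative percentages."""
    if trust == "canonical":
        return relevance * (1 + CANONICAL_MEMORY_BONUS)
    if trust == "raw":
        return relevance * (1 - RAW_LOGS_PENALTY)
    return relevance


def rank(score: Callable[[str, float], float]) -> List[str]:
    """Order item names by score, highest first."""
    return sorted(ARCHIVE, key=lambda name: score(*ARCHIVE[name]), reverse=True)


print(rank(additive))        # prints ['design-doc', 'session-log']; scores about 0.35 vs 0.30
print(rank(multiplicative))  # prints ['session-log', 'design-doc']; scores about 0.23 vs 0.40
```

Both tools use the same numbers, and both could claim to implement v0.0.1.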

Failure 2: Invariants encoded as comments, not constraints.

v0.0.1:

A plain list of strings. No id. No severity. No track. They were written as constraints but encoded as comments. The difference matters: a constraint has enforcement; a comment does not. These strings could not be machine-evaluated, could not be diff-checked against session behavior, and could not be reliably extracted by a model reading the archive.

What a machine-actionable invariant requires:
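As a hedged illustration, here is an invariant carrying the three fields discussed in this section (id, severity, track), plus a predicate so it can be evaluated. The severity scale, track names, predicates, and session-state keys are hypothetical. They are chosen only to show that structured invariants can be filtered by enforcement point, sorted for triage, and cited by id in audit output.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}  # hypothetical triage scale


@dataclass(frozen=True)
class Invariant:
    id: str                        # stable handle for audit output
    severity: str                  # drives triage order
    track: str                     # enforcement point (hypothetical names)
    holds: Callable[[Dict], bool]  # machine-evaluable check over session state


def audit(invariants: List[Invariant], track: str, state: Dict) -> List[Tuple[str, str]]:
    """Evaluate only the invariants bound to this track; report violations by id."""
    violations = [inv for inv in invariants if inv.track == track and not inv.holds(state)]
    violations.sort(key=lambda inv: SEVERITY_ORDER[inv.severity])
    return [(inv.id, inv.severity) for inv in violations]


INVARIANTS = [
    Invariant("INV-002", "minor", "pre_admission", lambda s: s["prompt_tokens"] <= 8000),
    Invariant("INV-001", "critical", "pre_admission",
              lambda s: "raw" not in s["admitted_trust_classes"]),
    Invariant("INV-003", "major", "output", lambda s: s["reply_is_json"]),
]
```

Input order does not matter: the audit sorts violations by severity, and the output-track invariant is never evaluated during admission.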

Without id, an invariant cannot be referenced in audit output. Without severity, a violation cannot be triaged. Without track, enforcement has no defined point of application. v0.0.1 had none of these.

Failure 3: No path to the model.

There was no invocation_protocol. No session-start procedure. No confirmation that the archive had been loaded. The model would begin a session with no instruction to locate the archive, no way to confirm it had been read, and no defined behavior for what to do at session start.

The archive existed. The model had no reliable way to know it existed.

A new session could start, answer confidently, and never once acknowledge the archive it was supposed to be governed by.
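One way to close that gap is to make the session start verifiable from outside the model. The sketch below is an assumption-laden illustration, not the procedure MICA later specified: the instruction wording, the ARCHIVE LOADED: confirmation line, and the archive id are all hypothetical. It checks acknowledgment, not comprehension, but a session that fails it can be stopped before it answers anything.

```python
def opening_instruction(archive_id: str) -> str:
    """Hypothetical text sent at session start, alongside the archive itself."""
    return (
        f"Before doing anything else, read the MICA archive '{archive_id}'. "
        f"Begin your first reply with the line: ARCHIVE LOADED: {archive_id}"
    )


def confirms_load(first_reply: str, archive_id: str) -> bool:
    """True only if the reply's first non-empty line is the expected confirmation."""
    lines = [line.strip() for line in first_reply.splitlines() if line.strip()]
    return bool(lines) and lines[0] == f"ARCHIVE LOADED: {archive_id}"


def start_session(first_reply: str, archive_id: str) -> str:
    """Refuse to continue rather than let an ungoverned session answer confidently."""
    if not confirms_load(first_reply, archive_id):
        return "halt: archive not acknowledged"
    return "proceed"
```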

4. Why Failure 3 Made the Others Meaningless

The three failures are not equal.

- Failure 1 is a calibration problem. The formula can be fixed. The weights can be justified. That work happened in subsequent versions.

- Failure 2 is a schema design problem. Fields can be added. Structure can be enforced. That work happened in the same range.

- Failure 3 is different in kind. It is not just a field that needs to be added. It is the requirement that the schema define how it reaches the model at all. A context item classified as anchor with eviction_priority: 3 has no enforcement if the model never sees the archive.

Failures 1 and 2 describe a schema that could not govern correctly. Failure 3 describes a schema that could not govern at all.

A context system does not fail only when it forgets. It also fails when it remembers without governance. v0.0.1 could not guarantee governance, because it could not guarantee that the archive was read at all.

Every version from v0.1.0 through v0.1.7 addressed the first two failures. Scoring became implementable. Invariants gained structure. Eviction became a five-phase strategy. Error handling was defined.

The invocation problem was not formally addressed until v0.1.8, which introduced invocation_protocol as a required field. It declares how the archive reaches an AI session, what pattern is used, and what the session opening report must contain.

That is the distance between v0.0.1 and v0.1.8.
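A required field is only useful if a loader rejects archives that omit it. The validator below is a minimal sketch of that check. The sub-field names delivery, pattern, and opening_report are assumptions standing in for the three things invocation_protocol is said to declare; they are not the v0.1.8 field names.

```python
from typing import Any, Dict, List

# Hypothetical sub-fields for the three things invocation_protocol declares.
REQUIRED_PROTOCOL_KEYS = ("delivery", "pattern", "opening_report")


def validate_archive(archive: Dict[str, Any]) -> List[str]:
    """Return a list of errors; an empty list means the archive may be invoked."""
    protocol = archive.get("invocation_protocol")
    if not isinstance(protocol, dict):
        # An archive with no path to the model is rejected outright.
        return ["missing required field: invocation_protocol"]
    return [
        f"invocation_protocol.{key} is missing or empty"
        for key in REQUIRED_PROTOCOL_KEYS
        if not protocol.get(key)
    ]
```

An archive shaped like v0.0.1, with scoring weights and invariants but no invocation_protocol, fails on the first check.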

5. What This Series Covers Next

- Part 1 defined the problem.

- Part 2 (this part) documented v0.0.1: what it was, what it got wrong, and why the most fundamental failure took the longest to fix.

- Part 3 covers v0.1.0 through v0.1.5: how scoring moved from hardcoded guesses to an implementable formula, what the eviction strategy revealed about context budget assumptions, and what was still missing at v0.1.5.

The series continues only where there is something concrete to specify, test, or correct.


Previous in the MICA Series: My LLM Kept Forgetting My Project. So I Built a Governance Schema.


This is currently the latest published entry.